@@ -0,0 +1,76 @@

+# Project Context: Local Food AI

+

+## 🎯 Vision Statement

+A local food AI that provides complete nutritional information for any food and generates full menu proposals from the user's specifications. The system is privacy-first: no user data leaves the server, and everything must run within the server's hardware limits (8 vCPUs, 30 GB RAM, no dedicated GPU).

+

+## 🏗️ Architecture & Tech Stack

+

+### Remote Environment

+- **Server**: Ubuntu 24.04 VM at `192.168.130.170` (8 vCPUs, 30 GB RAM, no dedicated GPU). Accessed via SSH as `francois` or `root`.

+- **Containerization**: Docker (for backend/frontend) or native deployment.

+- **LLM Engine**: Ollama, running lightweight, quantized local language models such as `mistral` or `llama3:8b`.

+- **Database Server**: MySQL (for user data, saved lists, and nutritional database).

+

+### Frontend Web Interface

+- **Framework**: Streamlit (Python)

+- **Purpose**: To provide an interactive chat interface for the AI, search functionality for food nutrition, user account management, and food combination calculators.

+

+### Local Environment

+- **Workspace**: `c:\Users\lanfr144\Documents\DOPRO1\Antigravity\Food`

+- **OS**: Windows

+

+### Python Environment

+Python is used for scripting, data manipulation, and interaction with the LLM and the database. Required libraries:

+- `streamlit`: To build the web application.

+- `ollama`: For querying local models.

+- `pandas`: For data processing (e.g., ingesting nutrition CSVs).

+- `mysql-connector-python` or `SQLAlchemy`: For database access.

+- **Web Search Tool** (e.g., a DuckDuckGo API wrapper): For the AI to gather external information dynamically and anonymously.
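
As a rough illustration of how the `ollama` library listed above might be called, here is a minimal sketch. The helper names `build_messages` and `ask_model`, the system prompt wording, and the choice of `mistral` as default are assumptions made for this example and are not taken from the project. The `chat` parameter is a hypothetical hook so the function can be exercised without a running Ollama server.

```python
from typing import Callable, Optional

DEFAULT_MODEL = "mistral"  # assumption: any model already pulled into Ollama works


def build_messages(question: str) -> list[dict]:
    """Build the chat message list sent to the model."""
    system = (
        "You are a nutrition assistant. Answer concisely and say so "
        "when you are unsure."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question.strip()},
    ]


def ask_model(
    question: str,
    model: str = DEFAULT_MODEL,
    chat: Optional[Callable] = None,
) -> str:
    """Send the question to a local model and return the reply text."""
    if chat is None:
        # Imported lazily so build_messages() stays usable without the package.
        import ollama

        chat = ollama.chat
    response = chat(model=model, messages=build_messages(question))
    return response["message"]["content"]
```

Because the request goes to the Ollama service running on the same server, the question text never has to leave the machine, which is consistent with the privacy requirement.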

+

+## 🔐 Core Requirements & Privacy

+- **User Accounts**: Secure login and registration system.

+- **Data Privacy**: No user data leaves the server.

+- **Repository**: Public Git repository at `https://git.btshub.lu` named `LocalFoodAI_<your IAM>`. It contains a strict `.gitignore`, and the teacher (`evegi144`) is added as a collaborator.

+- **Ease of Use**: Anyone should be able to clone the repo and run it easily (via Docker/scripts).
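
One possible way to back the secure login requirement is to store only salted password hashes. The sketch below uses PBKDF2 from the Python standard library. The function names and the iteration count are assumptions for illustration; the project may well choose a different scheme or a dedicated library.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 600_000  # assumed work factor; tune to the server's CPU budget


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Return (salt, digest) for the password, generating a salt if none is given."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest with the stored salt and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)
```

Only the salt and digest would be written to the users table, so the plain-text password is never stored.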

+

+## 🚀 Key Features (User Stories)

+1. **Nutritional Information**: View complete macros, minerals, vitamins, etc., for any food.

+2. **Food Combinations**: Enter quantities for multiple foods to get a combined nutritional overview. Store and edit these in named lists.

+3. **Nutrient Search**: Search for specific nutrients and sort foods containing them.

+4. **AI Menu Proposals**: Get AI-generated menu proposals based on nutritional goals and constraints (e.g., allergies).

+5. **AI Nutrition Chat**: Freely chat with the AI about nutrition.

+6. **Anonymous Web Search**: The AI can run background web searches from the server to fill in missing information.
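
The food combination and nutrient search stories (items 2 and 3) come down to simple arithmetic over per-100 g values. The sketch below shows one way this could look. The sample foods, their values, and the function names are hypothetical placeholders, not data from the project's nutrition database.

```python
from collections import defaultdict

# Hypothetical sample data: nutrient values per 100 g of each food.
NUTRIENTS_PER_100G = {
    "oats": {"kcal": 380.0, "protein_g": 13.0, "iron_mg": 4.0},
    "milk": {"kcal": 60.0, "protein_g": 3.0, "iron_mg": 0.0},
}


def combine(quantities_g: dict[str, float]) -> dict[str, float]:
    """Sum nutrients over several foods, scaling each by its quantity in grams."""
    totals: dict[str, float] = defaultdict(float)
    for food, grams in quantities_g.items():
        for nutrient, per_100g in NUTRIENTS_PER_100G[food].items():
            totals[nutrient] += per_100g * grams / 100.0
    return dict(totals)


def rank_by_nutrient(nutrient: str) -> list[tuple[str, float]]:
    """List foods sorted by their content of one nutrient, highest first."""
    foods = [
        (food, values.get(nutrient, 0.0))
        for food, values in NUTRIENTS_PER_100G.items()
    ]
    return sorted(foods, key=lambda item: item[1], reverse=True)
```

With this sample data, 50 g of oats plus 200 g of milk gives 190 + 120 = 310 kcal and 6.5 + 6.0 = 12.5 g of protein.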

+

+## 🚀 Installation Prerequisites & Deployment

+### Server Prerequisites (Ubuntu 24.04 Native)

+- `gcc` and `build-essential`.

+- `python3-venv`, `python3-dev`, and `python3-pip`.

+- `mysql-server` and `curl`.

+

+### Automated Deployment (`deploy.sh`)

+Running this script on a freshly installed server will automatically:

+1. Fetch and install all apt-level system prerequisites.

+2. Install Ollama natively.

+3. Push the custom configuration (`my.cnf`) to the MySQL server and set up the local Python virtual environment.

+4. Pip-install the project dependencies.

+

+## 💾 Database Configuration & Data Loading

+### 1. Initial MySQL Setup

+- The `init.sql` script is loaded into MySQL to create the database, the users, and the tables for user profiles, food combinations, and nutrition data.

+

+### 2. Data Import (CSV)

+- An ingestion script (using `pandas`) loads the nutritional database `.csv` file into the MySQL tables.
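
The ingestion step could look roughly like the sketch below. The CSV columns, the table name `foods`, and both function names are hypothetical, since the real file's schema is not specified here. An in-memory SQLite database stands in for MySQL so the example runs on its own; against MySQL, `DataFrame.to_sql` would instead be given a SQLAlchemy engine.

```python
import io
import sqlite3

import pandas as pd

# Hypothetical CSV sample; the real file's columns may differ.
SAMPLE_CSV = """Food Name,Energy (kcal),Protein (g)
Oats,380,13
Milk,60,3
Milk,60,3
"""


def load_nutrition_csv(source) -> pd.DataFrame:
    """Read the CSV, normalize headers to snake_case, and drop duplicate rows."""
    df = pd.read_csv(source)
    # "Energy (kcal)" becomes "energy_kcal", "Food Name" becomes "food_name".
    df.columns = (
        df.columns.str.strip()
        .str.lower()
        .str.replace(r"[^a-z0-9]+", "_", regex=True)
        .str.strip("_")
    )
    return df.drop_duplicates().reset_index(drop=True)


def write_table(df: pd.DataFrame, connection, table: str = "foods") -> int:
    """Write the frame to the database, replacing the table if it exists."""
    df.to_sql(table, connection, if_exists="replace", index=False)
    return len(df)


if __name__ == "__main__":
    frame = load_nutrition_csv(io.StringIO(SAMPLE_CSV))
    conn = sqlite3.connect(":memory:")  # stand-in for the MySQL server
    print(write_table(frame, conn), "rows written")
```

Normalizing the headers once at import time keeps column names predictable for the Streamlit pages and the SQL queries built on top of them.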

+

+### 3. Search Capabilities

+- The MySQL database must be optimized for text/context queries (for example, with full-text indexes on the searchable columns) to support the AI's Retrieval-Augmented Generation (RAG).
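
To make the retrieval idea concrete, here is a minimal sketch of a retrieve-then-prompt step. It assumes a hypothetical `foods` table with `name` and `description` columns covered by a MySQL `FULLTEXT` index; the table, the columns, and the function names are illustrative only. The cursor can be any DB-API cursor that uses `%s` placeholders, such as one from `mysql-connector-python`.

```python
# MySQL full-text query against the assumed `foods` table.
FULLTEXT_QUERY = (
    "SELECT name, description FROM foods "
    "WHERE MATCH(name, description) AGAINST (%s IN NATURAL LANGUAGE MODE) "
    "LIMIT %s"
)


def retrieve(cursor, question: str, limit: int = 5) -> list[tuple[str, str]]:
    """Fetch the rows that best match the question via the full-text index."""
    cursor.execute(FULLTEXT_QUERY, (question, limit))
    return list(cursor.fetchall())


def build_rag_prompt(question: str, rows: list[tuple[str, str]]) -> str:
    """Put the retrieved rows in front of the question as grounding context."""
    context = "\n".join(f"- {name}: {description}" for name, description in rows)
    return (
        "Answer using only the context below. "
        "If the context is not enough, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

The resulting prompt string would then be passed to the local model, so the answer is grounded in rows from the project's own database rather than in the model's memory alone.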

+

+## 📝 Roadmap & Next Steps (Sprints)

|

|

|

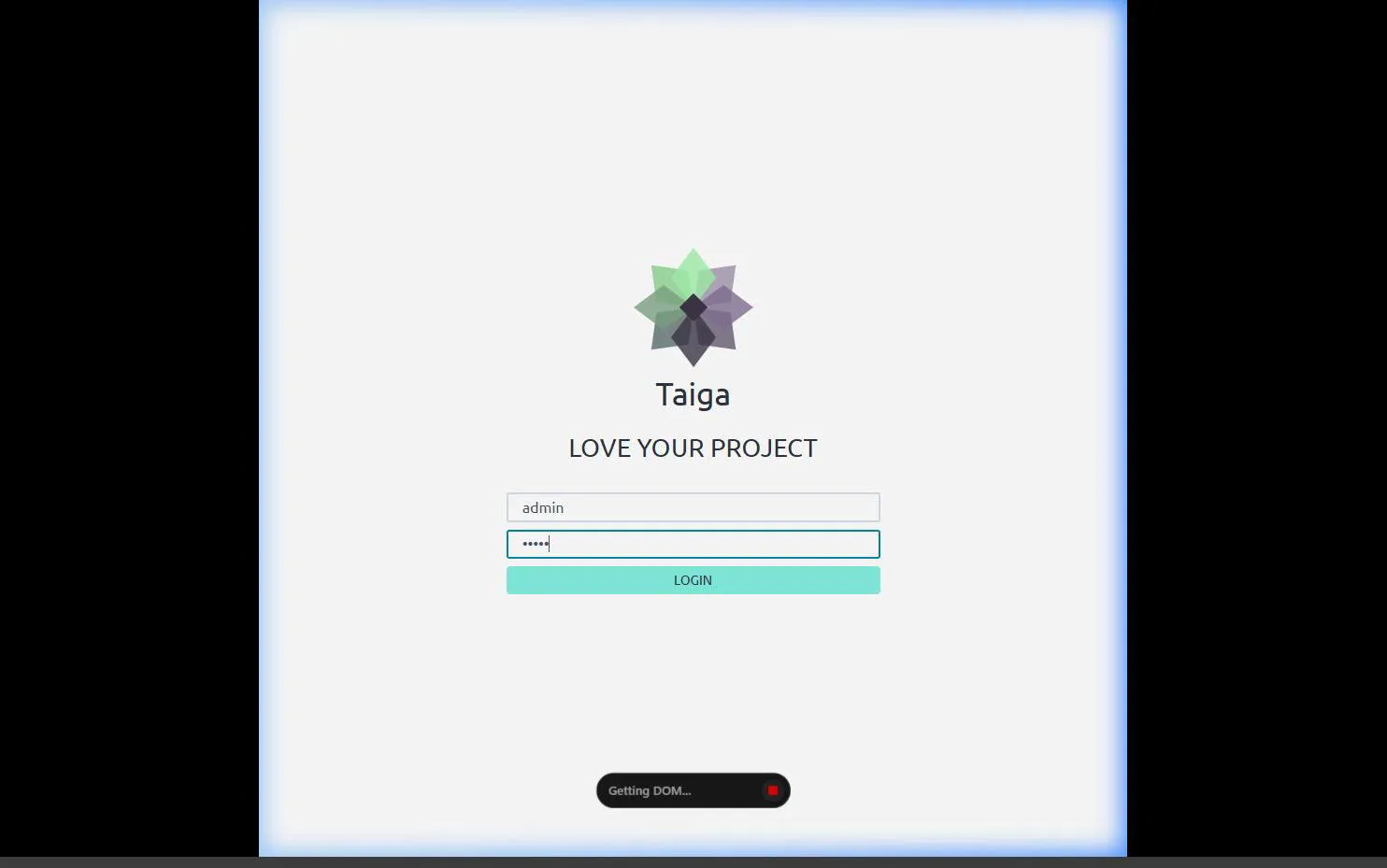

+- [ ] **Sprint 1 (Foundation)**: Initialize the Git repository (`LocalFoodAI_<IAM>`), set up `.gitignore`, finalize `deploy.sh`, initialize MySQL (`init.sql`), and build the Streamlit user login.

+- [ ] **Sprint 2 (Data Core)**: Import food nutritional CSV via Pandas into MySQL. Build Streamlit pages for food search and details.

+- [ ] **Sprint 3 (Combinations)**: Implement Streamlit logic to combine foods by gram amounts and save lists to MySQL.

+- [ ] **Sprint 4 (Local AI)**: Deploy lightweight Ollama models and build the Streamlit chat interface.

+- [ ] **Sprint 5 (Advanced AI)**: Implement RAG for menu proposals and integrate the anonymous web search tool.

+- [ ] **Sprint 6 (Polish)**: Test thoroughly and polish the `README.md`.

+

+---

+*Generated by Antigravity. Update this file as technical requirements and data schemas evolve.*
